There is a character in Kim Stanley Robinson’s Mars Trilogy who I think about occasionally. His name is Sax Russell. He is a physicist, one of the First Hundred, the scientists sent to colonize Mars, and he spends the early part of the trilogy absolutely certain that his work is apolitical. Science, in Sax’s view, is about understanding and manipulating the physical world. It operates on evidence, method, and rigor. Politics, emotions, competing values: these are distractions from the real work of making Mars habitable. If you just do the science correctly, Sax believes, the outcomes will speak for themselves.

He is wrong. It takes him about a century to fully understand how wrong he is, and watching that understanding develop across three novels and two thousand pages is one of the most useful things I have read for thinking about what we actually do when we implement educational technology.

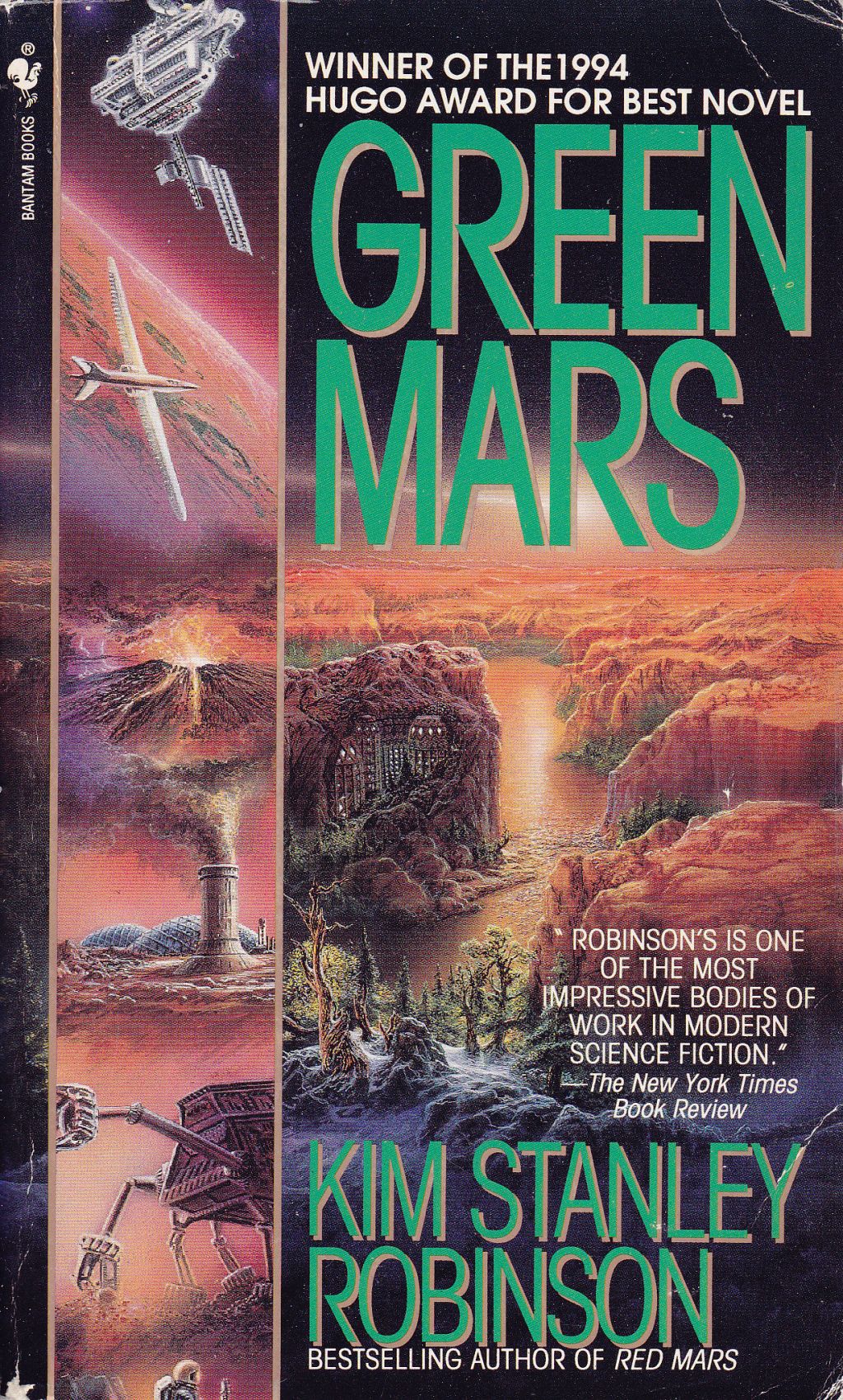

Robinson’s Mars Trilogy (Red Mars, Green Mars, and Blue Mars, published between 1992 and 1996) follows the colonization and transformation of Mars over roughly two hundred years. It is dense, deliberate science fiction, full of geological descriptions and committee meetings and long arguments about atmospheric chemistry. It is also, underneath all of that, a sustained argument that technical decisions are always ethical decisions, that every system we build encodes values whether we intend it to or not, and that the people who build those systems have a responsibility to understand what they are encoding.

If you have not read the books, that is fine. I will give you enough of Sax’s story to follow the argument. But the reason I keep coming back to this particular piece of fiction is that Robinson does something rare: he shows, in granular detail, what it looks like when a brilliant technical person gradually realizes that their work was never as neutral as they believed.

The Technocrat’s Blind Spot

When Sax arrives on Mars, he is already one of the most respected scientists on the mission. He is brilliant, methodical, and deeply committed to the project of terraforming, the long process of transforming Mars’s atmosphere, temperature, and surface conditions to support human life without pressure suits. For Sax, this is an engineering challenge with an engineering solution. You study the variables, you design interventions (greenhouse gas cocktails, orbital mirrors, genetically engineered organisms), you measure the results, and you adjust. The scientific method, applied at planetary scale.

What Sax does not initially see is that every terraforming decision is also a decision about values. How fast should the atmosphere change? That is not just a question about chemistry. It is a question about who benefits first, who bears the risks, and whose vision of Mars’s future gets prioritized. The “Greens,” Sax’s faction, want aggressive terraforming because they believe a habitable Mars is better than a dead one. The “Reds,” led by geologist Ann Clayborne, argue that Mars’s original landscape has intrinsic value that should not be destroyed for human convenience. Between these poles, every technical parameter, every parts-per-million target, every species introduction, carries embedded assumptions about what matters and who gets to decide.

Robinson does not make this a simple morality play. Sax is not a villain. He is a person who genuinely believes he is doing good work, and in many ways he is. The problem is not his intentions. The problem is his framework. He has a mental model in which technical excellence and ethical responsibility are separate domains, and that separation allows him to make consequential decisions without examining their full implications.

This is a framework I encounter regularly in EdTech implementation. It shows up every time a conversation about platform selection focuses exclusively on features and integration capabilities without asking what the platform’s data practices assume about student privacy. It shows up when a discussion about assessment design centers on psychometric validity without examining what the assessment’s structure rewards and what it renders invisible. It shows up when a district adopts a “personalized learning” system without interrogating whose definition of personalization is baked into the algorithm, or what model of learning the algorithm’s recommendations are optimized around.

The technical questions are real and they matter. I am not suggesting we ignore them. But Sax’s story is a reminder that treating technical questions as if they exist in a separate category from ethical questions is itself an ethical choice, and it is one that tends to benefit the people who already have power over how systems get designed.

What Breaks the Framework Open

Sax’s transformation does not happen through argument or persuasion. It happens through damage.

In the second book, decades after the failed first Martian revolution of 2061, Sax is captured by Earth security forces and tortured. He suffers brain damage that fundamentally alters his capacity for language and social connection. In the aftermath, he has to relearn how to communicate, how to read social cues, how to navigate relationships that previously seemed irrelevant to his work. Robinson writes this not as a punishment but as a forced expansion of perception. Sax’s injury does not make him less intelligent. It makes him differently intelligent. The parts of human experience that his previous cognitive framework filtered out as noise become, for the first time, legible to him.

What follows across the second and third books is a slow, uneven process of integration. Sax does not abandon science. He does not become a politician or an activist. He remains, at his core, a person who wants to understand and shape the physical world. But his understanding of what “the physical world” includes expands to encompass the social systems that determine how scientific knowledge gets used. He begins to see that the terraforming debate was never just about atmospheric chemistry. It was about competing visions of what a good society looks like, and chemistry was the medium through which those visions were enacted.

He falls in love with Ann Clayborne, his ideological opposite, which Robinson uses to dramatize the idea that genuine understanding requires engaging with perspectives that challenge your foundational assumptions rather than dismissing them as outside your domain. Sax does not come to agree with Ann. But he comes to understand that her objections to terraforming are not anti-scientific. They emerge from a different set of values applied to the same evidence, and a purely technical framework has no way to adjudicate between them.

I do not have a brain injury story to offer as a parallel. But I do have the slower, less dramatic version of the same realization that comes from years of implementation work. The moment you realize that the LMS configuration you set up privileges certain kinds of participation over others, and that this is not a bug you can fix with a settings change but a reflection of assumptions built into the platform’s architecture. The moment a district’s data dashboard makes certain student outcomes hyper-visible while rendering others structurally invisible, and you understand that the dashboard is not just reporting reality but shaping what counts as reality for the people who use it. The moment you recognize that “we chose this tool because it integrates well with our existing systems” is also a statement about which existing systems you have decided not to question.

The Hidden Curriculum of Systems

There is a concept in critical pedagogy that Robinson’s trilogy illustrates with unusual clarity, even though he never uses the term. It is the idea of the hidden curriculum: the lessons a system teaches through its structure, incentives, and defaults, regardless of what it officially claims to teach. Philip Jackson coined the term in 1968, and thinkers like Paulo Freire, Henry Giroux, and Michael Apple have developed it in different directions since then. The core insight is that educational systems do not just deliver content. They model relationships to authority, define what counts as legitimate knowledge, sort people into categories, and normalize particular ways of being in the world. They do this whether anyone designs them to or not.

On Robinson’s Mars, the hidden curriculum of the terraforming project is visible at planetary scale. The Greens’ approach to atmospheric transformation does not just change the air. It creates a world optimized for a particular kind of human life, one that resembles Earth. That optimization makes Mars more hospitable for Earth-born immigrants, which accelerates immigration, which shifts the political balance, which threatens the autonomy of the people who built the society in the first place. The technical parameters of terraforming, parameters that Sax originally treated as pure engineering, turn out to encode a political program. Making Mars more Earth-like makes it easier for Earth to control.

The parallel to educational technology is not subtle. Every system we implement in a district has a hidden curriculum. An LMS that organizes content into linear modules teaches students that learning is sequential, even if we believe it is not. A gradebook that weights summative assessments at 80% teaches students (and teachers) that performance on demand matters more than growth over time, regardless of what our assessment philosophy document says. A “personalized learning” platform that generates recommendations based on standardized performance data teaches students that their learning path should be determined by algorithmic interpretation of their deficits, not by their interests, contexts, or self-knowledge.

None of these are inevitable. They are design choices, and they can be made differently. But they can only be made differently if the people implementing the systems recognize them as choices in the first place, rather than treating them as technical defaults that simply came with the package.
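The gradebook weighting is the easiest of these choices to see in numbers. Here is a minimal sketch in Python. The student scores and the 50% comparison weight are hypothetical, chosen only for illustration, and the `final_grade` function is my own simplification, not how any particular gradebook product computes grades.

```python
def final_grade(formative: float, summative: float, summative_weight: float) -> float:
    """Weighted average of two category scores, each on a 0-100 scale."""
    return summative_weight * summative + (1 - summative_weight) * formative


# Hypothetical student: strong, steady coursework and weaker on-demand tests.
formative, summative = 92.0, 70.0

# Same work, two different configuration choices.
heavy = final_grade(formative, summative, summative_weight=0.8)
balanced = final_grade(formative, summative, summative_weight=0.5)

print(f"80% summative weight: {heavy:.1f}")     # 74.4
print(f"50% summative weight: {balanced:.1f}")  # 81.0
```

The student's work is identical in both runs. Only the weight changes, and the grade moves by almost seven points. A single number in a settings screen decides which kind of evidence about this student counts most.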

This is where Sax’s arc is most instructive. His blind spot was not ignorance. It was category confusion. He placed ethical questions in a separate bucket from technical questions, and because he was brilliant at the technical work, he never had to confront what was in the other bucket until it was forced on him. The equivalent in our field is treating platform selection, system configuration, data architecture, and integration design as technical problems with technical solutions, when they are actually design problems with embedded values that shape outcomes for real people.

What Robinson Gets Right About Expertise

One of the things Robinson does throughout the trilogy that I find particularly relevant is his treatment of how expertise functions in a community. The First Hundred are all experts. They are scientists, engineers, psychologists, and ecologists selected from the best in their fields. And yet their expertise, applied without attention to context and values, repeatedly produces outcomes that none of them intended.

The longevity treatments are the clearest example. Martian scientists develop a therapy that dramatically extends human lifespan. This is, from a technical standpoint, an extraordinary achievement. But the question of who gets access to the treatment, and what political consequences follow from the answer, cannot be settled by the science that produced it. On Mars, the treatment is distributed broadly. On Earth, it becomes a source of massive inequality, accelerating the tensions that eventually lead to world war. The same technical achievement produces liberation in one context and catastrophe in another, depending entirely on the social and political systems that govern its distribution.

Robinson is not arguing against expertise. He is arguing against expertise that does not account for its own context. Sax is a better scientist by the end of the trilogy, not a worse one, because he has expanded his understanding of what his science affects. His technical knowledge does not decrease. His awareness of what that knowledge connects to increases.

This framing has changed how I think about my own role. When I help a district implement a system, my technical expertise (platform administration, API integrations, configuration design, training development) is necessary. It is the reason I am in the room. But it is not sufficient, and if I treat it as sufficient, I am making the same category error Sax makes. The technical implementation is always also a values implementation. The question is whether I am paying attention to both layers or only the one I was trained to see.

Toward a More Honest Practice

I want to be careful here not to overstate the lesson. Robinson’s Sax Russell has the luxury of two hundred years and a planetary-scale laboratory to work through his understanding. We have quarterly reviews and limited budgets. I am not arguing that every platform configuration meeting needs to become a seminar on critical pedagogy. I am arguing that the separation between “technical work” and “values work” is artificial, and that maintaining it makes us worse at both.

In practice, what this looks like for me is a set of questions I try to bring into implementation conversations alongside the technical ones. Not in place of them. Alongside them. Questions like: What does this system make easy to see, and what does it make hard to see? Whose workflow does this design optimize for, and whose does it complicate? What assumptions about learning are built into this platform’s defaults, and do those assumptions match what we actually believe about how people learn? If a student or teacher could see the logic behind this system’s recommendations, would they recognize their own experience in it?

These are not questions with clean answers. They are questions that keep the ethical dimension of technical work visible, which is all I think we can reasonably ask of ourselves. Robinson does not give Sax a formula for integrating technical and ethical reasoning. He gives him a gradually expanding awareness that the two were never separate in the first place.

The Martian constitution that eventually emerges from the trilogy’s long political process reflects this integration. Its environmental court has the authority to evaluate laws not just on procedural or economic grounds but on ecological ones. Technical decisions about terraforming have to pass through a framework that explicitly accounts for values. The system is not perfect. Robinson shows it being gamed, challenged, and revised throughout the third book. But it represents an institutional commitment to the principle that technical and ethical questions belong in the same conversation, evaluated by the same process, subject to the same accountability.

We do not have environmental courts for educational technology. What we have is our own professional judgment, applied in the rooms where implementation decisions get made. Robinson’s trilogy has made me more attentive to what is in those rooms beyond the technical requirements, and more willing to name it when I see it. That is not a dramatic transformation. It is more like Sax’s slow expansion of perception, an ongoing process of noticing what was always there but did not fit the original framework.

If you are doing similar work, helping districts navigate EdTech decisions that are always partly technical and partly something else, I would like to hear how you think about the relationship between those two layers. You can reach me at licht.education@gmail.com, and you can find more of my writing on education, technology, and design at bradylicht.com.